ICLR 2020: Compression based bound for non-compressed network

ICLR 2020: Compression based bound for non-compressed network: unified generalization error analysis of large compressible deep neural network

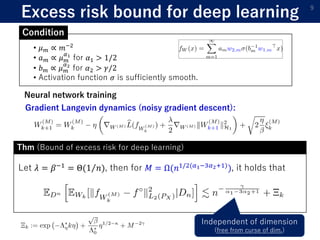

1) The document presents a new compression-based bound for analyzing the generalization error of large deep neural networks, even when the networks are not explicitly compressed.

2) It shows that if a trained network's weights and covariance matrices exhibit low-rank properties, then the network has a small intrinsic dimensionality and can be efficiently compressed.

3) This allows deriving a tighter generalization bound than existing approaches, providing insight into why overparameterized networks generalize well despite having more parameters than training examples.
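The link between low-rank weights and compressibility in point 2 can be illustrated with a small numerical sketch. This is not the bound from the presentation; it only assumes a synthetic, nearly low-rank weight matrix and shows that a truncated SVD stores it with far fewer parameters at small reconstruction error. The helper names (`effective_rank`, `truncated_svd_compress`), the matrix sizes, and the tolerance `tol` are hypothetical choices made for illustration.

```python
import numpy as np


def effective_rank(W, tol=1e-2):
    """Count singular values larger than tol times the largest one (illustrative criterion)."""
    s = np.linalg.svd(W, compute_uv=False)
    return int(np.sum(s > tol * s[0]))


def truncated_svd_compress(W, rank):
    """Return factors A (m x rank) and B (rank x n) such that A @ B approximates W."""
    U, s, Vt = np.linalg.svd(W, full_matrices=False)
    A = U[:, :rank] * s[:rank]  # scale each kept left singular vector by its singular value
    B = Vt[:rank, :]
    return A, B


# Synthetic "trained" weight matrix: exactly rank 4 plus small noise (an assumption).
rng = np.random.default_rng(0)
m, n, true_rank = 64, 48, 4
W = rng.standard_normal((m, true_rank)) @ rng.standard_normal((true_rank, n))
W += 1e-4 * rng.standard_normal((m, n))

r = effective_rank(W)
A, B = truncated_svd_compress(W, r)

rel_err = np.linalg.norm(W - A @ B) / np.linalg.norm(W)
full_params = m * n                # parameters stored by the dense layer
compressed_params = r * (m + n)    # parameters stored by the two low-rank factors

print(f"effective rank: {r}")
print(f"relative reconstruction error: {rel_err:.2e}")
print(f"parameters: {full_params} dense vs {compressed_params} factored")
```

In this toy setting the factored form needs `r * (m + n)` numbers instead of `m * n`, which is the sense in which a near-low-rank layer has a small intrinsic dimensionality even though the stored network is not compressed.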

[ICLR2021 (spotlight)] Benefit of deep learning with non-convex noisy gradient descent

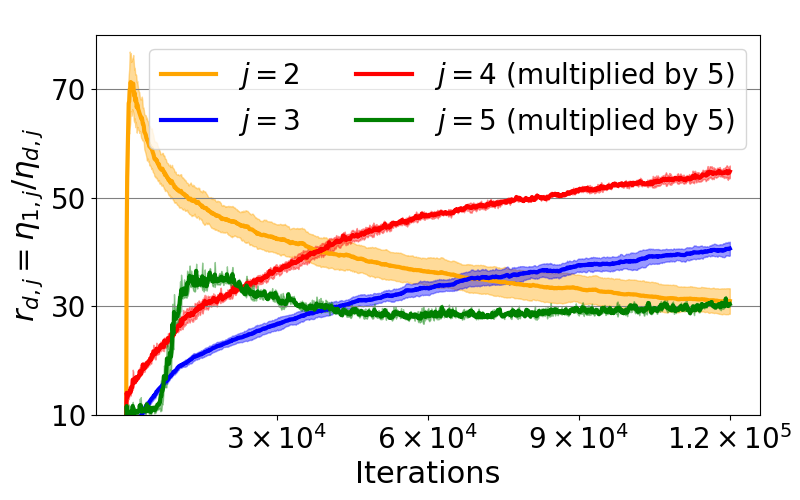

Discrete MRF Inference of Marginal Densities for Non-uniformly Discretized Variable Space

PAC-Bayesian Bound for Gaussian Process Regression and Multiple Kernel Additive Model

Minimax optimal alternating minimization for kernel nonparametric tensor learning

Koopman-based generalization bound: New aspect for full-rank weights

JSAI 2021 4G2-GS-2k-05 Homogeneous responsive activation function Yamatani Activation and application to single-image super-resolution

Learning group variational inference

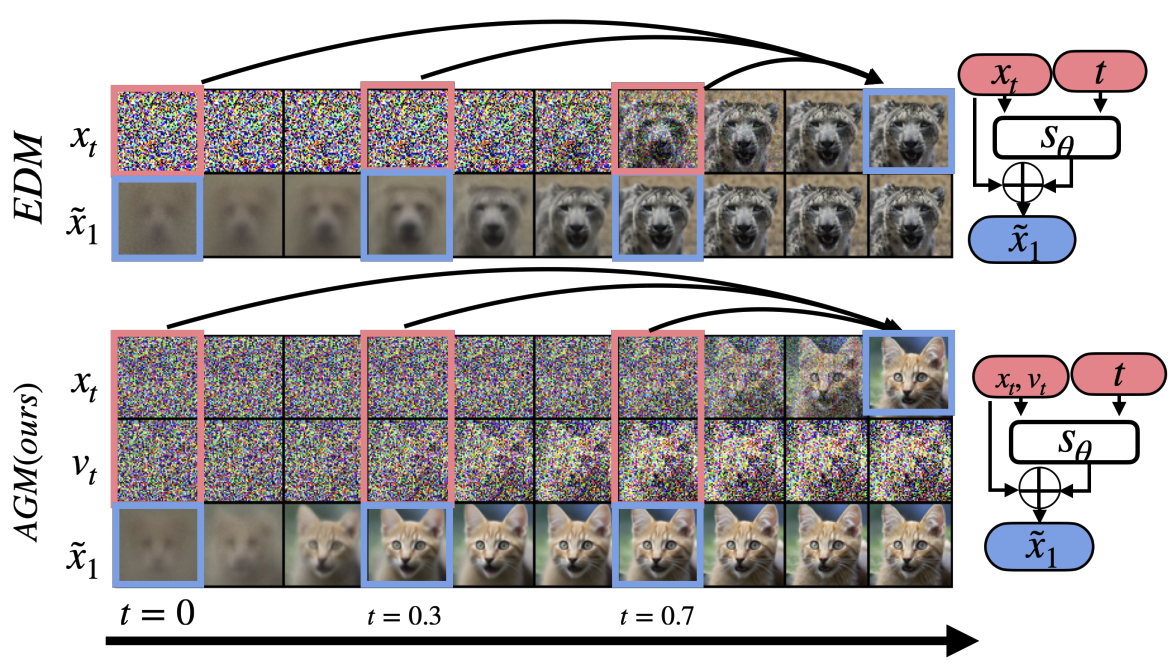

Publications - Jiatao Gu

ICLR 2020

Conference Proceedings - CECS

How does unlabeled data improve generalization in self training

Co-clustering of multi-view datasets: a parallelizable approach

Meta-Learning with Implicit Gradients

Binary Neural Architecture Search